Nimble: Efficiently Compiling Dynamic Neural Networks for Model Inference

Haichen Shen, Jared Roesch, Zhi Chen, Wei Chen, Yong Wu, Mu Li, Vin Sharma, Zachary Tatlock, Yida Wang

Conference on Machine Learning and Systems (MLSys) 2021

Abstract

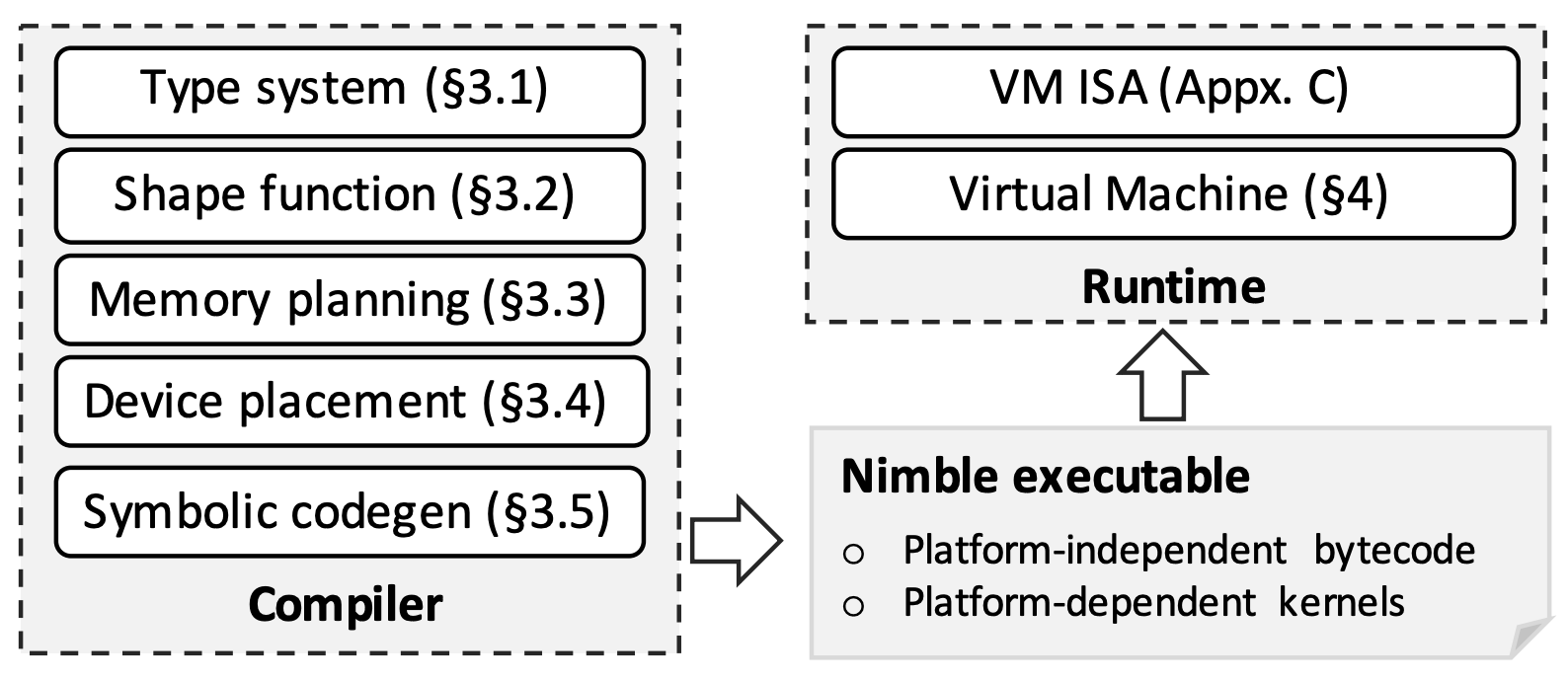

Modern deep neural networks increasingly make use of features such as control flow, dynamic data structures, and dynamic tensor shapes. Existing deep learning systems focus on optimizing and executing static neural networks which assume a pre-determined model architecture and input data shapes—assumptions that are violated by dynamic neural networks. Therefore, executing dynamic models with deep learning systems is currently both inflexible and sub-optimal, if not impossible. Optimizing dynamic neural networks is more challenging than static neural networks; optimizations must consider all possible execution paths and tensor shapes. This paper proposes Nimble, a high-performance and flexible system to optimize, compile, and execute dynamic neural networks on multiple platforms. Nimble handles model dynamism by introducing a dynamic type system, a set of dynamism-oriented optimizations, and a light-weight virtual machine runtime. Our evaluation demonstrates that Nimble outperforms existing solutions for dynamic neural networks by up to 20x on hardware platforms including Intel CPUs, ARM CPUs, and Nvidia GPUs.

BibTeX

@inproceedings{2021-mlsys-nimble,
  title = {Nimble: Efficiently Compiling Dynamic Neural Networks for Model Inference},
  author = {Shen, Haichen and Roesch, Jared and Chen, Zhi and Chen, Wei and Wu, Yong and Li, Mu and Sharma, Vin and Tatlock, Zachary and Wang, Yida},
  booktitle = {Proceedings of Machine Learning and Systems},
  date = {2021},
}